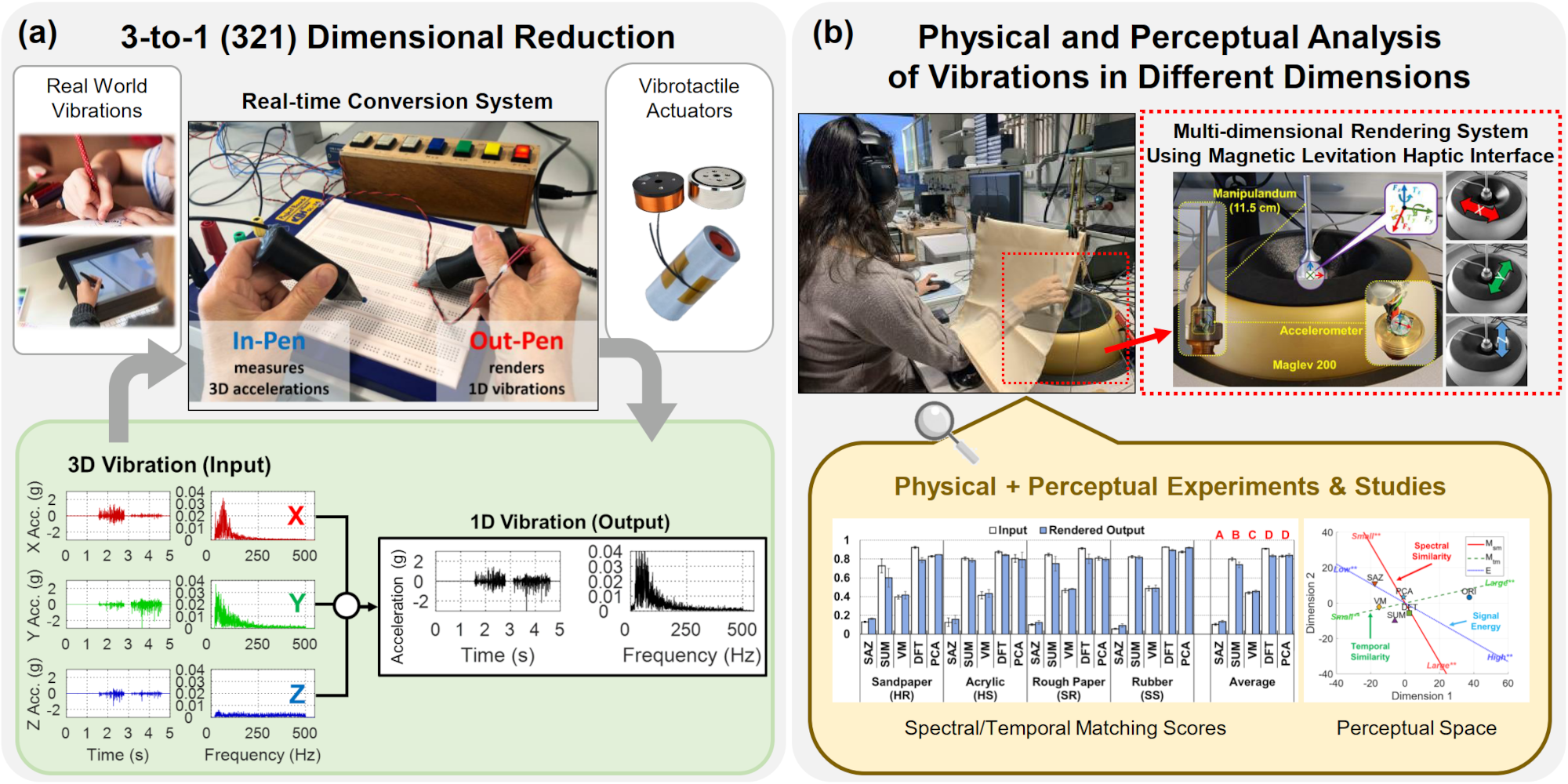

We are studying ways to reduce a real 3D vibration to a perceptually equivalent 1D vibration. (a) The concept and our real-time conversion system. (b) Our analysis of multi-dimensional vibrations using a magnetic levitation haptic interface.

Unconstrained tool-mediated interaction with a surface generates 3D vibrations that contain high-frequency accelerations in all three Cartesian directions. These vibrations convey rich information about the task, so they need to be captured and displayed to the user in both virtual and remote interactions. Because humans cannot easily perceive the direction of such vibrations, haptics researchers often reduce 3D vibrations to 1D signals and render them with a single-axis actuator, which limits system cost and complexity. This three-to-one (321) reduction can be performed with many different algorithms, which have rarely been compared.
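
The two simplest reductions can be sketched in a few lines. This is a minimal illustration, not code from this project: the function names `single_axis`, `axis_sum`, and `energy` are hypothetical, and the signals are assumed to be plain lists of acceleration samples. The made-up example shows one weakness of naive methods: when the chosen axis is quiet or the components cancel, the 1D output can lose all of the vibration energy that was present in 3D.

```python
from typing import List, Sequence

Signal = List[float]


def single_axis(ax: Sequence[float], ay: Sequence[float], az: Sequence[float],
                axis: int = 2) -> Signal:
    """Keep one Cartesian component (z by default) and discard the other two."""
    return [float(v) for v in (ax, ay, az)[axis]]


def axis_sum(ax: Sequence[float], ay: Sequence[float], az: Sequence[float]) -> Signal:
    """Add the three components sample by sample."""
    return [float(x + y + z) for x, y, z in zip(ax, ay, az)]


def energy(*signals: Sequence[float]) -> float:
    """Sum of squared samples over all given signals."""
    return float(sum(v * v for s in signals for v in s))


# Hypothetical recording: x and y vibrate in antiphase, z is silent.
ax = [1.0, -1.0, 1.0, -1.0]
ay = [-1.0, 1.0, -1.0, 1.0]
az = [0.0, 0.0, 0.0, 0.0]

print(energy(ax, ay, az))               # 3D energy: 8.0
print(energy(single_axis(ax, ay, az)))  # z axis only: 0.0
print(energy(axis_sum(ax, ay, az)))     # x and y cancel: 0.0
```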

This project investigates the quality of 321 conversion methods by analyzing the properties of their input and output vibrations. We established a real-time conversion system that simultaneously measures 3D accelerations and plays the corresponding 1D vibrations [ ]. A user interacts with various objects through a stylus that contains a three-axis accelerometer; the captured signals are reduced to 1D by different algorithms and rendered by a standard voice-coil actuator. Objective analysis and subjective user ratings confirmed that more sophisticated conversion methods, such as DFT321, perform better than the common approach of selecting the signal from a single axis [ ].
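
DFT321 is usually described as combining the three axes in the frequency domain: each output frequency bin takes the total spectral magnitude of the three axes, so no spectral energy is discarded, and borrows the phase of the sum of the three axis spectra. The sketch below follows that description under stated assumptions; it is not this project's implementation. It processes a whole recording at once with a naive DFT, whereas a real-time system would presumably apply the same step to short windows, and the helper names `dft`, `idft`, and `dft321` are hypothetical.

```python
import cmath
import math
from typing import List, Sequence


def dft(x: Sequence[complex]) -> List[complex]:
    """Naive O(n^2) discrete Fourier transform (adequate for short frames)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(spectrum: Sequence[complex]) -> List[complex]:
    """Inverse of dft()."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def dft321(ax: Sequence[float], ay: Sequence[float], az: Sequence[float]) -> List[float]:
    """Reduce three acceleration axes to one signal, DFT321-style (sketch).

    Per frequency bin: magnitude = sqrt(|Ax|^2 + |Ay|^2 + |Az|^2),
    phase = phase of (Ax + Ay + Az).
    """
    if not len(ax) == len(ay) == len(az):
        raise ValueError("all three axes must have the same number of samples")
    combined = []
    for xk, yk, zk in zip(dft(ax), dft(ay), dft(az)):
        magnitude = math.sqrt(abs(xk) ** 2 + abs(yk) ** 2 + abs(zk) ** 2)
        # If the axes cancel exactly at this bin, the phase is undefined;
        # cmath.phase then returns 0.0 for an exact zero.
        phase = cmath.phase(xk + yk + zk)
        combined.append(cmath.rect(magnitude, phase))
    # Real inputs give a conjugate-symmetric spectrum, so the inverse is real
    # up to rounding error; keep only the real part.
    return [s.real for s in idft(combined)]


# Hypothetical 8-sample recording.
ax = [0.3, -0.8, 1.2, 0.1, -0.5, 0.9, -1.1, 0.4]
ay = [0.5, 0.2, -0.7, 0.6, -0.2, -0.4, 0.3, -0.1]
az = [-0.2, 0.4, 0.1, -0.9, 0.7, 0.0, -0.3, 0.2]
out = dft321(ax, ay, az)
print(round(sum(v * v for v in ax + ay + az), 6))  # 3D energy: 7.69
print(round(sum(v * v for v in out), 6))           # 1D energy: 7.69
```

Because every bin keeps the combined magnitude, the output's total energy matches the summed energy of the three input axes (Parseval's theorem), something that selecting a single axis cannot guarantee. When only one axis carries signal, the sketch returns that axis unchanged.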

We also developed a novel multi-dimensional vibration rendering system that accurately generates 3D vibrations using a commercial magnetic levitation haptic device, and we verified its performance at rendering recorded 3D vibrations both quantitatively and qualitatively [ ]. We then used this system in experiments comparing how humans perceive the original 3D vibration and several 1D versions of the same signal. Ongoing work characterizes all common 321 algorithms by establishing perceptual spaces [ ].

This project provides practical results for both real-time haptic teleoperation and offline haptic signal processing. For instance, our findings can contribute to medical teleoperation systems by offering a simple but realistic 321 method that lets surgeons feel vibrotactile feedback from remote surgical robots.