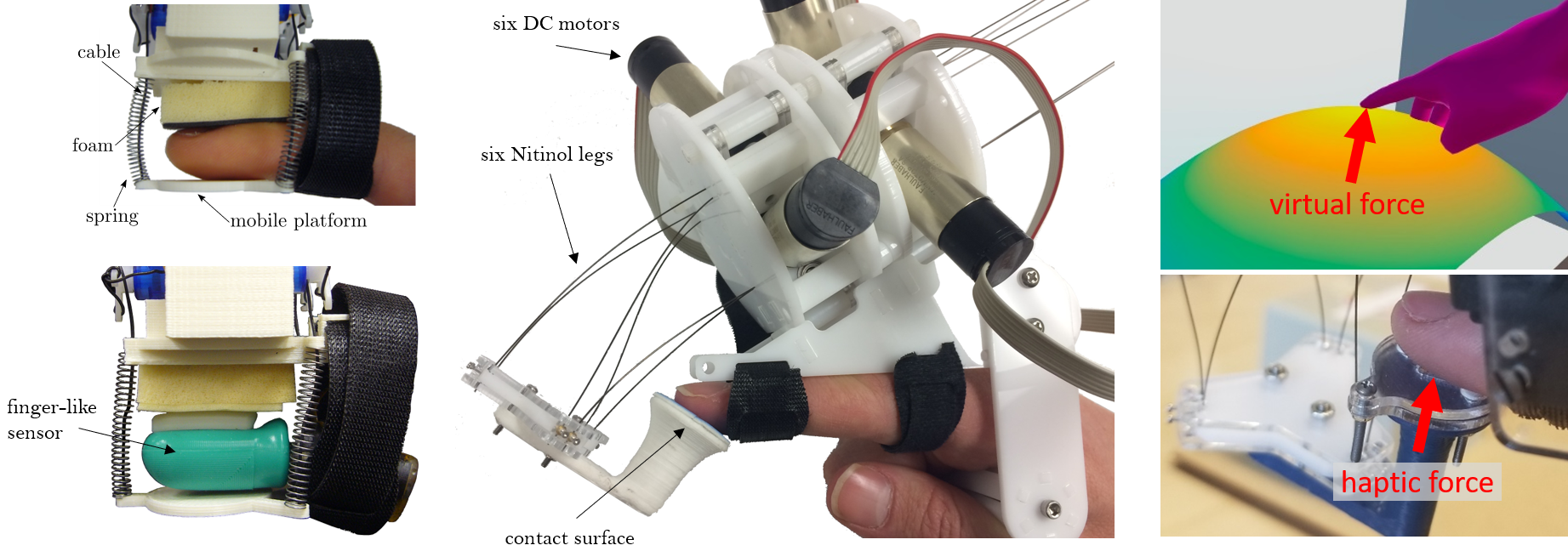

This project introduces a wide assortment of fingertip haptic devices and their rendering algorithms. Left: a three-degree-of-freedom (3-DOF) device that can render haptic sensations to a human finger (top) or a finger-like sensor for data collection (bottom). Middle: our 6-DOF fingertip haptic device that renders rich contact sensations by controlling the lengths of six elastic legs. Right: an example of a virtual interaction rendered by this 6-DOF device.

Wearable haptic devices have seen growing interest in recent years, but providing realistic tactile feedback remains an open challenge. Daily interactions with physical objects elicit complex sensations at the fingertips. Furthermore, human fingertips vary widely in physical dimensions and perceptual abilities, which makes it harder to simulate haptic interactions in a compelling manner. Through this project (see [ ] for summary), we aim to provide hardware- and software-based solutions for rendering more expressive and personalized tactile cues to the fingertip.

We are the first to explore rendering 6-DOF tactile fingertip feedback via a wearable 6-DOF device, such that any fingertip interaction with a surface can be simulated. We demonstrated the potential of parallel continuum manipulators to meet the requirements of such a device [ ], and we presented a motorized version named the Fingertip Puppeteer, or Fuppeteer for short [ ]. We then used this novel hardware to simulate different lower-dimensional devices and evaluate the role of tactile dimensionality in virtual object interaction [ ]. The results showed that higher-dimensional tactile feedback may indeed allow users to complete a wider range of virtual tasks, but that feedback dimensionality, surprisingly, does not greatly affect the exploratory techniques users employ.

Wearable haptic interfaces must also be small and lightweight. We used principal component analysis to find the minimum number of an existing device’s actuators required to render a given tactile sensation with minimal estimated haptic rendering error [ ].
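
As a rough illustration of how principal component analysis can indicate the number of independent actuation directions in a set of actuator commands, the following minimal sketch counts the principal components needed to reach a chosen explained-variance threshold. It is not the procedure from the cited work: the function name `min_components_for_variance`, the 95% threshold, the use of explained variance as a stand-in for estimated rendering error, and the synthetic six-actuator data are all assumptions made for this example.

```python
import numpy as np


def min_components_for_variance(commands, variance_threshold=0.95):
    """Smallest number of principal components whose cumulative
    explained variance reaches `variance_threshold` (hypothetical helper)."""
    # Center each actuator channel so the SVD yields principal components.
    centered = commands - commands.mean(axis=0)
    # Singular values relate to component variances via s**2.
    singular_values = np.linalg.svd(centered, compute_uv=False)
    explained = singular_values**2 / np.sum(singular_values**2)
    cumulative = np.cumsum(explained)
    # First index where the cumulative variance meets the threshold.
    k = int(np.searchsorted(cumulative, variance_threshold)) + 1
    return min(k, len(cumulative)), cumulative


# Synthetic example (assumed data, not measurements): six actuator
# channels driven by only two independent latent signals plus small noise.
rng = np.random.default_rng(0)
latent = rng.standard_normal((500, 2))
mixing = np.array([[1.0, 1.0, 1.0, 0.0, 0.0, 0.0],
                   [0.0, 0.0, 0.0, 1.0, 1.0, 1.0]])
commands = latent @ mixing + 0.01 * rng.standard_normal((500, 6))

k, cumulative = min_components_for_variance(commands)
print("components needed:", k)
print("cumulative explained variance:", np.round(cumulative, 4))
```

In this toy setting the six channels collapse onto two dominant directions, which is the kind of redundancy that motivates reducing actuator count. A real analysis would need recorded actuator commands and an error metric tied to the rendered sensation rather than raw variance.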

Finally, we explored personalizing fingertip tactile feedback for a particular user. We presented two generalizable software-based approaches that modify an existing data-driven haptic rendering algorithm to display tactile cues more accurately to fingertips of different sizes [ ]. Results showed that both personalization approaches significantly reduced force error magnitudes and improved realism ratings.