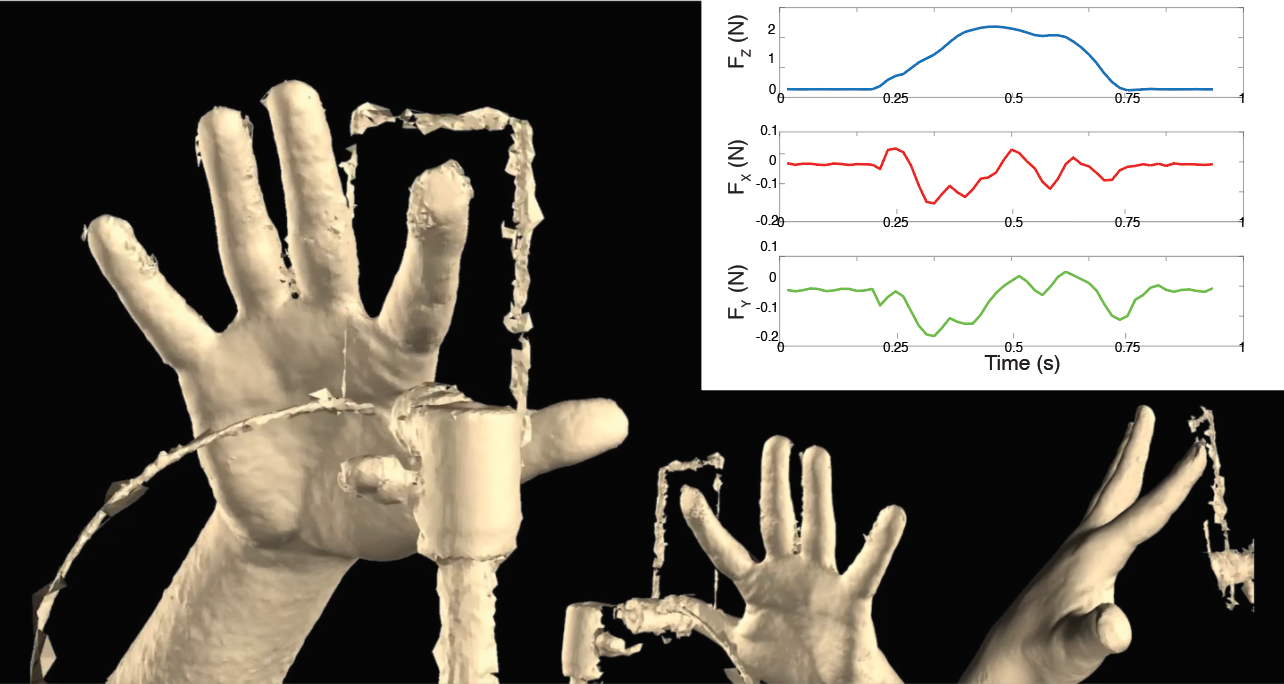

4D capture (in space and time) of a finger pressing on a 2-mm-thick glass surface mounted on a 3-axis force sensor.

Little is known about the shape and properties of the human finger during haptic interaction because such situations are difficult to instrument. Yet these parameters are essential for designing and controlling wearable haptic finger devices and for delivering realistic tactile feedback with such devices. For example, there is currently no standard model of the fingertip’s shape and its variations across the population. Interaction-dependent deformations have also not yet been modeled.

This project explores a framework for four-dimensional scanning (3D shape over time) and modeling of finger-surface interactions, aiming to capture the motion and deformations of the entire finger with high resolution while simultaneously recording the interfacial forces at the contact. We are currently capturing the deformations of the fingertip during active pressing on a rigid surface, which is a first step toward an accurate characterization of the shape and deformations of the physically interacting human hand and fingers.

In the future, we would like to optimize the scanner configuration and capture a large number of finger-surface interactions from a set of participants that is representative of the general population. We believe that these data will enable the creation of a statistical model that simulates the natural behavior of the interacting finger.

An accurate model of the variations in finger properties across the human population could make it possible to infer a user’s fingertip properties from scarce data obtained by lower-resolution scanning. It may also be relevant for inferring the physical properties of the underlying tissue from observed deformations of the surface mesh, as has been shown for body tissues. Further applications of this research include simulating human grasping in virtual worlds in a way that is consistent with our manipulation of real objects, and estimating the contact forces generated by the tactile interaction from the 4D data alone.
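To make the idea of inferring a full fingertip shape from scarce data more concrete, here is a minimal sketch in Python with NumPy. It is not the project's method: it assumes, purely for illustration, that the statistical model is a linear PCA shape model built from registered meshes flattened into vectors, and that a low-resolution scan supplies only a subset of those coordinates. The function names `fit_shape_model` and `infer_full_shape` and the `reg` parameter are hypothetical.

```python
import numpy as np


def fit_shape_model(shapes, n_components):
    """Fit a linear (PCA) shape model. Assumption: a stand-in for the
    statistical model mentioned in the text, not the project's actual model.

    shapes: (n_subjects, n_coords) array of registered, flattened meshes.
    Returns the mean shape, the principal modes, and their standard deviations.
    """
    mean = shapes.mean(axis=0)
    centered = shapes - mean
    # The right singular vectors of the centered data are the principal modes.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    components = vt[:n_components]
    stddev = singular_values[:n_components] / np.sqrt(max(len(shapes) - 1, 1))
    return mean, components, stddev


def infer_full_shape(mean, components, stddev, observed_idx, observed_values,
                     reg=1e-3):
    """Estimate a full shape from a sparse subset of its coordinates.

    Solves a ridge-regularized least-squares problem for the mode
    coefficients, penalizing each coefficient by the inverse variance
    of its mode, then reconstructs every coordinate from those coefficients.
    """
    a = components[:, observed_idx].T            # (n_observed, n_components)
    b = observed_values - mean[observed_idx]     # residual at observed coords
    prior = reg * np.diag(1.0 / np.maximum(stddev, 1e-12) ** 2)
    coeffs = np.linalg.solve(a.T @ a + prior, a.T @ b)
    return mean + coeffs @ components
```

In use, `fit_shape_model` would be called once on the high-resolution population data, and `infer_full_shape` would then be called per user with the indices and values of whatever coordinates the coarser scan provides. The regularization weight trades fidelity to the sparse measurements against staying close to the population mean.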