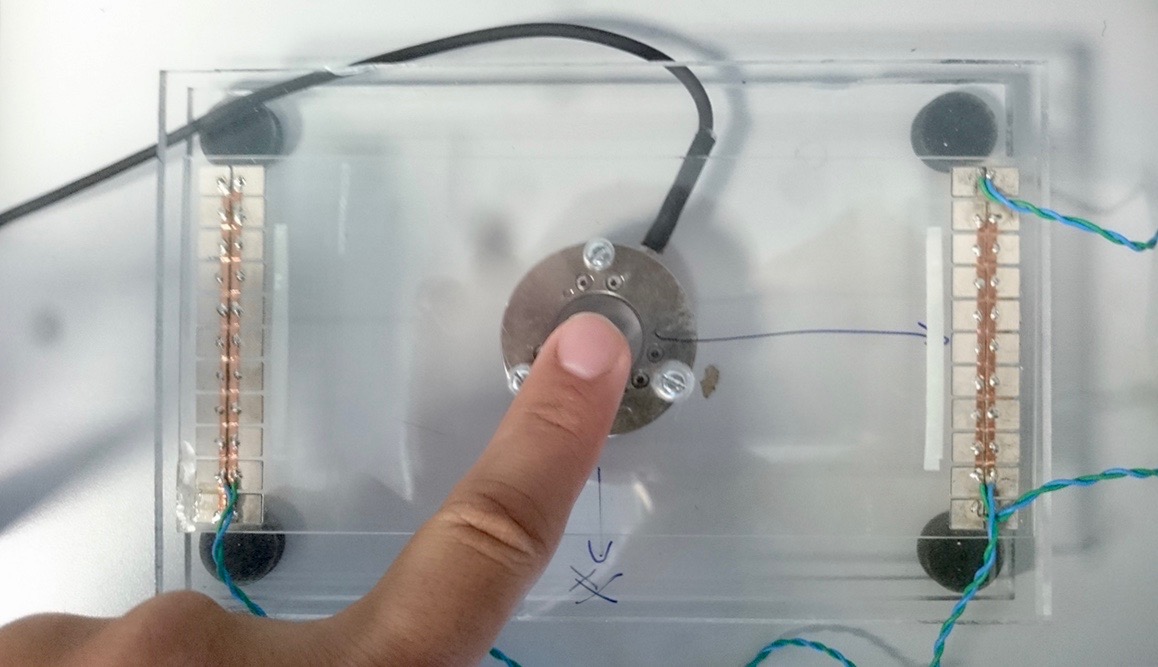

Vibrating a glass screen at ultrasonic frequencies reduces the friction between the finger and the surface during contact. We can use this phenomenon to create virtual tactile elements on the flat screen, such as edges, holes and bumps. As shown in the image, piezoceramic actuators glued to the left and right sides of the screen generate the ultrasonic waves.

The demand for natural haptic feedback on touch screens, such as those in smartphones and car control panels, has grown rapidly in recent years. One such feedback technology, vibrotactile stimulation, is already incorporated into many consumer devices, but it provides only a general vibration sensation to the user's fingers and does not work well on mechanically grounded screens.

Friction-based tactile feedback has recently been demonstrated as a promising alternative actuation approach. The development of these novel interfaces has raised interest in touch-based human-machine interaction while also highlighting the need for appropriate high-fidelity strategies for tactile rendering. These approaches are limited, however, by how little is known about the sensory mechanisms that mediate human perception of frictional cues.

As shown in the figure, virtual geometric features and complex textures (e.g., buttons, edges, patterns, fabrics) can be rendered on flat screens via ultrasonic vibrations that modulate the friction force experienced by the user. However, optimizing these tactile sensations requires understanding which components of the frictional signal are critical and how the intensity of each component should be scaled according to the dynamics of the interaction.
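To make the idea of position-dependent friction modulation concrete, the sketch below maps finger position to a normalized vibration amplitude. It is only an illustration: the function name `edge_amplitude`, the linear transition, and the millimetre units are assumptions rather than this project's rendering method, and whether such a profile is actually felt as an edge is exactly the kind of question the project investigates.

```python
def edge_amplitude(x_mm, edge_mm, width_mm):
    """Normalized vibration amplitude (0..1) at finger position x_mm.

    Assumed convention: amplitude 0 means no vibration (unmodified
    friction) and amplitude 1 means full vibration (reduced friction).
    The amplitude rises linearly over a transition zone of width_mm
    centred on edge_mm. All names and units here are hypothetical.
    """
    if width_mm <= 0:
        # Degenerate case: an abrupt step at the edge location.
        return 1.0 if x_mm >= edge_mm else 0.0
    u = (x_mm - edge_mm) / width_mm + 0.5
    return max(0.0, min(1.0, u))


if __name__ == "__main__":
    # Sample the profile along a 100 mm swipe across the screen.
    for x in range(0, 101, 10):
        print(x, edge_amplitude(x, edge_mm=50.0, width_mm=10.0))
```

A narrower `width_mm` gives a steeper friction change for the same finger speed, which is one way the slope of the stimulus could be varied.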

In this project, we investigate how humans perceive ultrasonic vibration waveforms with different durations and slopes, and which high-level percepts (such as edges, bumps and holes) these waveforms generate. To that end, we physically measure the frictional patterns that the finger experiences during ultrasonic stimulation and then correlate these measurements with the users' verbal reports.
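One simple way to parameterize a waveform by duration and slope is a trapezoidal amplitude envelope applied to an ultrasonic carrier. The following is a minimal sketch under stated assumptions: the trapezoid shape, the function names, and the 30 kHz carrier frequency are illustrative choices, not the stimuli used in this project.

```python
import math


def trapezoid_envelope(t, duration, ramp_time, peak=1.0):
    """Envelope value at time t (s) for a pulse lasting `duration` seconds.

    The envelope rises linearly from 0 to `peak` over `ramp_time`, holds,
    and falls back to 0 over `ramp_time`. The ramp slope is therefore
    peak / ramp_time. Shape and parameter names are assumptions.
    """
    if ramp_time <= 0 or 2 * ramp_time > duration:
        raise ValueError("need 0 < ramp_time <= duration / 2")
    if t < 0 or t > duration:
        return 0.0
    rising = t / ramp_time
    falling = (duration - t) / ramp_time
    return peak * min(1.0, rising, falling)


def drive_signal(t, duration, ramp_time, carrier_hz=30_000.0, peak=1.0):
    """Sinusoidal carrier scaled by the envelope (hypothetical 30 kHz)."""
    envelope = trapezoid_envelope(t, duration, ramp_time, peak)
    return envelope * math.sin(2 * math.pi * carrier_hz * t)


if __name__ == "__main__":
    # A 100 ms pulse with 20 ms ramps, sampled every 10 ms.
    for i in range(11):
        t = i * 0.01
        print(f"{t:.2f} s  envelope = {trapezoid_envelope(t, 0.1, 0.02):.2f}")
```

Varying `duration` and `ramp_time` independently yields a family of stimuli whose measured friction patterns could then be compared against what users report feeling.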

The long-term goals of this project are to understand how to generate tactile features that users perceive unambiguously and to use this knowledge to guide the design of friction-based stimuli for future tactile displays.