2024

L’Orsa, R., Bisht, A., Yu, L., Murari, K., Westwick, D. T., Sutherland, G. R., Kuchenbecker, K. J.

Reflectance Outperforms Force and Position in Model-Free Needle Puncture Detection

In Proceedings of the Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Orlando, USA, July 2024 (inproceedings) Accepted

Mohan, M., Mat Husin, H., Kuchenbecker, K. J.

Expert Perception of Teleoperated Social Exercise Robots

In Proceedings of the ACM/IEEE International Conference on Human-Robot Interaction (HRI), pages: 769-773, Boulder, USA, March 2024, Late-Breaking Report (LBR) (5 pages) (inproceedings)

2023

Allemang–Trivalle, A.

Enhancing Surgical Team Collaboration and Situation Awareness through Multimodal Sensing

In Proceedings of the ACM International Conference on Multimodal Interaction, pages: 716-720, Extended abstract (5 pages) presented at the ACM International Conference on Multimodal Interaction (ICMI) Doctoral Consortium, Paris, France, October 2023 (inproceedings)

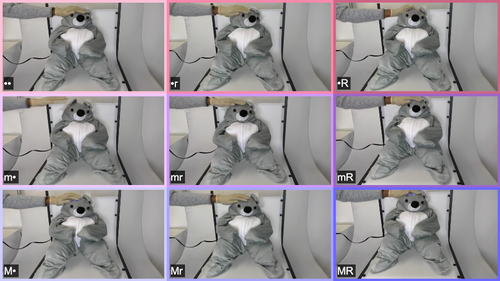

Burns, R. B., Ojo, F., Kuchenbecker, K. J.

Wear Your Heart on Your Sleeve: Users Prefer Robots with Emotional Reactions to Touch and Ambient Moods

In Proceedings of the IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), pages: 1914-1921, Busan, South Korea, August 2023 (inproceedings)

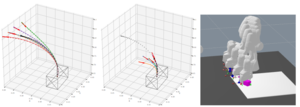

Oh, Y., Passy, J., Mainprice, J.

Augmenting Human Policies using Riemannian Metrics for Human-Robot Shared Control

In Proceedings of the IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), pages: 1612-1618, Busan, South Korea, August 2023 (inproceedings)

Gong, Y., Javot, B., Lauer, A. P. R., Sawodny, O., Kuchenbecker, K. J.

Naturalistic Vibrotactile Feedback Could Facilitate Telerobotic Assembly on Construction Sites

In Proceedings of the IEEE World Haptics Conference (WHC), pages: 169-175, Delft, The Netherlands, July 2023 (inproceedings)

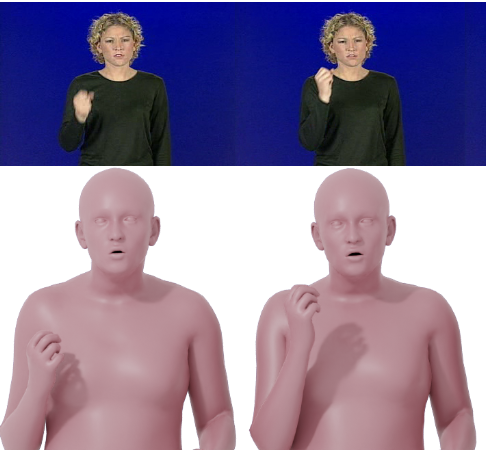

Forte, M., Kulits, P., Huang, C. P., Choutas, V., Tzionas, D., Kuchenbecker, K. J., Black, M. J.

Reconstructing Signing Avatars from Video Using Linguistic Priors

In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages: 12791-12801, June 2023 (inproceedings)

2022

L’Orsa, R., Lama, S., Westwick, D., Sutherland, G., Kuchenbecker, K. J.

Towards Semi-Automated Pleural Cavity Access for Pneumothorax in Austere Environments

In Proceedings of the International Astronautical Congress (IAC), pages: 1-7, Paris, France, September 2022 (inproceedings)

Allemang–Trivalle, A., Eden, J., Ivanova, E., Huang, Y., Burdet, E.

How Long Does It Take to Learn Trimanual Coordination?

In Proceedings of the IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), pages: 211-216, Napoli, Italy, August 2022 (inproceedings)

Allemang–Trivalle, A., Eden, J., Huang, Y., Ivanova, E., Burdet, E.

Comparison of Human Trimanual Performance Between Independent and Dependent Multiple-Limb Training Modes

In Proceedings of the IEEE RAS/EMBS International Conference for Biomedical Robotics and Biomechatronics (BioRob), Seoul, Korea, August 2022 (inproceedings)

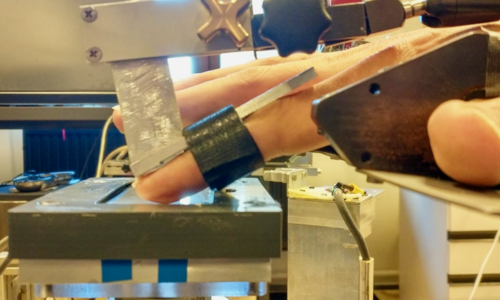

Machaca, S., Cao, E., Chi, A., Adrales, G., Kuchenbecker, K. J., Brown, J. D.

Wrist-Squeezing Force Feedback Improves Accuracy and Speed in Robotic Surgery Training

In Proceedings of the IEEE RAS/EMBS International Conference for Biomedical Robotics and Biomechatronics (BioRob), pages: 700-707, Seoul, Korea, August 2022 (inproceedings)

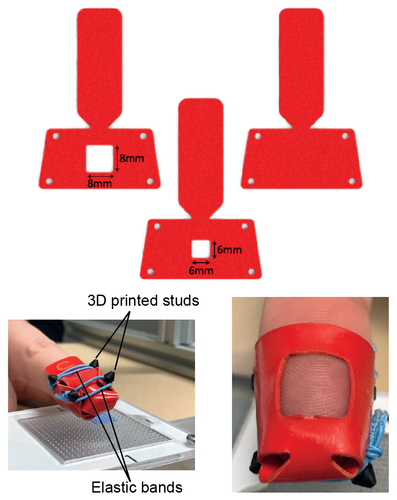

Gueorguiev, D., Javot, B., Spiers, A., Kuchenbecker, K. J.

Larger Skin-Surface Contact Through a Fingertip Wearable Improves Roughness Perception

In Haptics: Science, Technology, Applications, pages: 171-179, Lecture Notes in Computer Science, 13235, (Editors: Seifi, Hasti and Kappers, Astrid M. L. and Schneider, Oliver and Drewing, Knut and Pacchierotti, Claudio and Abbasimoshaei, Alireza and Huisman, Gijs and Kern, Thorsten A.), Springer, Cham, 13th International Conference on Human Haptic Sensing and Touch Enabled Computer Applications (EuroHaptics 2022), May 2022 (inproceedings)

Ortenzi, V., Filipovica, M., Abdlkarim, D., Pardi, T., Takahashi, C., Wing, A. M., Di Luca, M., Kuchenbecker, K. J.

Robot, Pass Me the Tool: Handle Visibility Facilitates Task-Oriented Handovers

In Proceedings of the ACM/IEEE International Conference on Human-Robot Interaction (HRI), pages: 256-264, March 2022, Valerio Ortenzi and Maija Filipovica contributed equally to this publication. (inproceedings)

2021

Thomas, N., Fazlollahi, F., Brown, J. D., Kuchenbecker, K. J.

Sensorimotor-Inspired Tactile Feedback and Control Improve Consistency of Prosthesis Manipulation in the Absence of Direct Vision

In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages: 6174-6181, Prague, Czech Republic, September 2021 (inproceedings)

Pardi, T., Ghalamzan E., A., Ortenzi, V., Stolkin, R.

Optimal Grasp Selection, and Control for Stabilising a Grasped Object, with Respect to Slippage and External Forces

In Proceedings of the IEEE-RAS International Conference on Humanoid Robots (Humanoids 2020), pages: 429-436, Munich, Germany, July 2021 (inproceedings)

Abdlkarim, D., Ortenzi, V., Pardi, T., Filipovica, M., Wing, A. M., Kuchenbecker, K. J., Di Luca, M.

PrendoSim: Proxy-Hand-Based Robot Grasp Generator

In ICINCO 2021: Proceedings of the 18th International Conference on Informatics in Control, Automation and Robotics, pages: 60-68, (Editors: Gusikhin, Oleg and Nijmeijer, Henk and Madani, Kurosh), SciTePress, Setúbal, July 2021 (inproceedings)

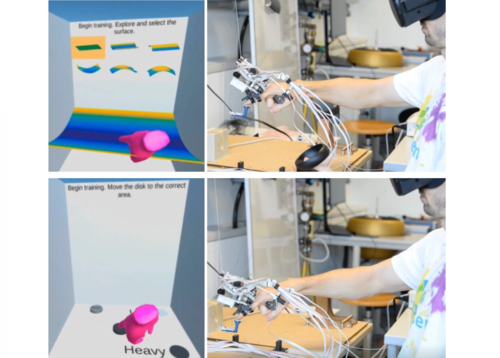

Young, E. M., Kuchenbecker, K. J.

Ungrounded Vari-Dimensional Tactile Fingertip Feedback for Virtual Object Interaction

In CHI ’21: Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, pages: 217, ACM, New York, NY, May 2021 (inproceedings)

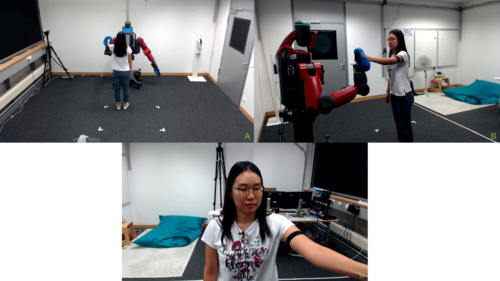

Mohan, M., Nunez, C. M., Kuchenbecker, K. J.

Robot Interaction Studio: A Platform for Unsupervised HRI

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Xi'an, China, May 2021 (inproceedings)

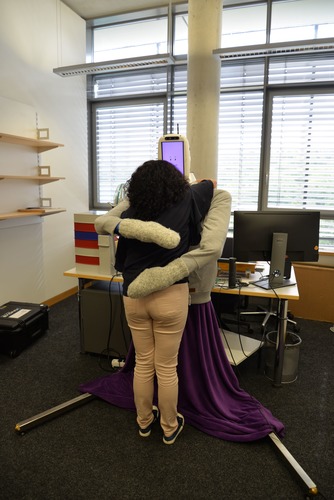

Block, A. E., Christen, S., Gassert, R., Hilliges, O., Kuchenbecker, K. J.

The Six Hug Commandments: Design and Evaluation of a Human-Sized Hugging Robot with Visual and Haptic Perception

In HRI ’21: Proceedings of the 2021 ACM/IEEE International Conference on Human-Robot Interaction, pages: 380-388, ACM, New York, NY, USA, March 2021 (inproceedings)

2020

Fitter, N. T., Kuchenbecker, K. J.

Synchronicity Trumps Mischief in Rhythmic Human-Robot Social-Physical Interaction

In Robotics Research, 10, pages: 269-284, Springer Proceedings in Advanced Robotics, (Editors: Amato, Nancy M. and Hager, Greg and Thomas, Shawna and Torres-Torriti, Miguel), Springer, Cham, 18th International Symposium on Robotics Research (ISRR), 2020 (inproceedings)

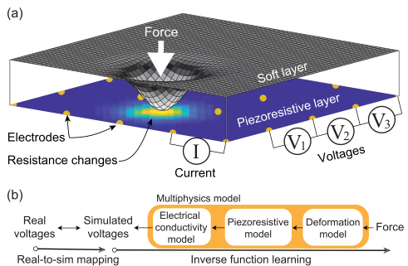

Lee, H., Park, H., Serhat, G., Sun, H., Kuchenbecker, K. J.

Calibrating a Soft ERT-Based Tactile Sensor with a Multiphysics Model and Sim-to-real Transfer Learning

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), pages: 1632-1638, May 2020 (inproceedings)

Park, K., Park, H., Lee, H., Park, S., Kim, J.

An ERT-Based Robotic Skin with Sparsely Distributed Electrodes: Structure, Fabrication, and DNN-Based Signal Processing

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), pages: 1617-1624, IEEE, Piscataway, NJ, May 2020 (inproceedings)

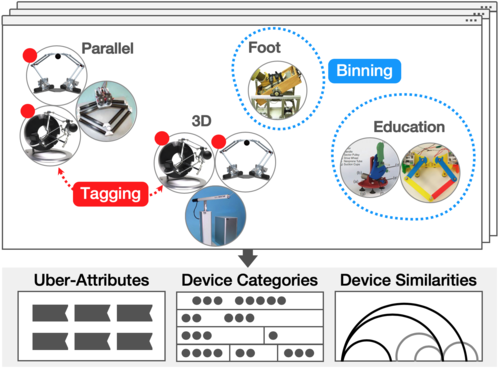

Seifi, H., Oppermann, M., Bullard, J., MacLean, K. E., Kuchenbecker, K. J.

Capturing Experts’ Mental Models to Organize a Collection of Haptic Devices: Affordances Outweigh Attributes

In Proceedings of the ACM SIGCHI Conference on Human Factors in Computing Systems (CHI), pages: 268, April 2020 (inproceedings)

Gueorguiev, D., Lambert, J., Thonnard, J., Kuchenbecker, K. J.

Changes in Normal Force During Passive Dynamic Touch: Contact Mechanics and Perception

In Proceedings of the IEEE Haptics Symposium (HAPTICS), pages: 746-752, March 2020 (inproceedings)

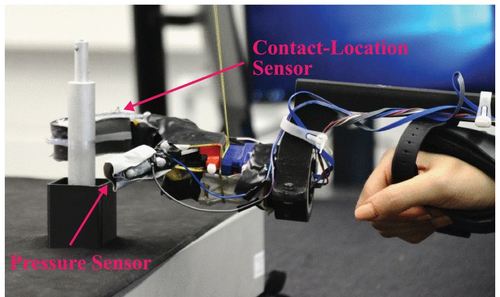

Mohtasham, D., Narayanan, G., Calli, B., Spiers, A. J.

Haptic Object Parameter Estimation during Within-Hand-Manipulation with a Simple Robot Gripper

In Proceedings of the IEEE Haptics Symposium (HAPTICS), pages: 140-147, March 2020 (inproceedings)

2019

Park, H., Lee, H., Park, K., Mo, S., Kim, J.

Deep Neural Network Approach in Electrical Impedance Tomography-Based Real-Time Soft Tactile Sensor

In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages: 7447-7452, IEEE, November 2019 (conference)

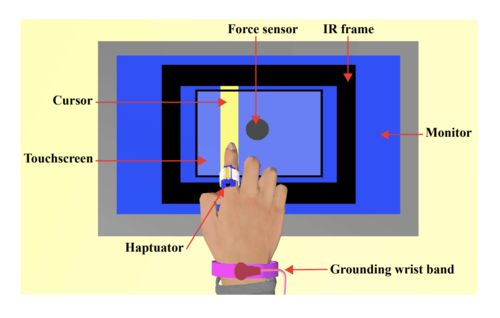

Jamalzadeh, M., Güçlü, B., Vardar, Y., Basdogan, C.

Effect of Remote Masking on Detection of Electrovibration

In Proceedings of the IEEE World Haptics Conference (WHC), pages: 229-234, Tokyo, Japan, July 2019 (inproceedings)

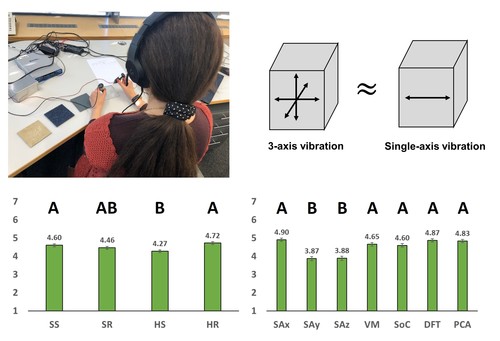

Park, G., Kuchenbecker, K. J.

Objective and Subjective Assessment of Algorithms for Reducing Three-Axis Vibrations to One-Axis Vibrations

In Proceedings of the IEEE World Haptics Conference (WHC), pages: 467-472, July 2019 (inproceedings)

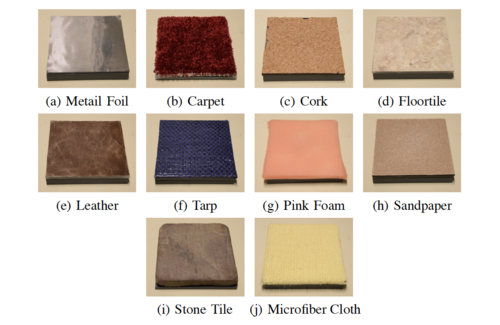

Vardar, Y., Wallraven, C., Kuchenbecker, K. J.

Fingertip Interaction Metrics Correlate with Visual and Haptic Perception of Real Surfaces

In Proceedings of the IEEE World Haptics Conference (WHC), pages: 395-400, Tokyo, Japan, July 2019 (inproceedings)

Gloumakov, Y., Spiers, A. J., Dollar, A. M.

A Clustering Approach to Categorizing 7 Degree-of-Freedom Arm Motions during Activities of Daily Living

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), pages: 7214-7220, Montreal, Canada, May 2019 (inproceedings)

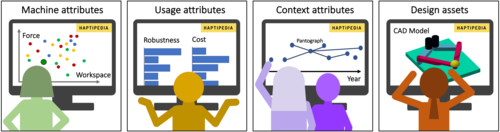

Seifi, H., Fazlollahi, F., Oppermann, M., Sastrillo, J. A., Ip, J., Agrawal, A., Park, G., Kuchenbecker, K. J., MacLean, K. E.

Haptipedia: Accelerating Haptic Device Discovery to Support Interaction & Engineering Design

In Proceedings of the ACM SIGCHI Conference on Human Factors in Computing Systems (CHI), pages: 1-12, Glasgow, Scotland, May 2019 (inproceedings)

Lee, H., Park, K., Kim, J., Kuchenbecker, K. J.

Internal Array Electrodes Improve the Spatial Resolution of Soft Tactile Sensors Based on Electrical Resistance Tomography

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), pages: 5411-5417, Montreal, Canada, May 2019, Hyosang Lee and Kyungseo Park contributed equally to this publication (inproceedings)

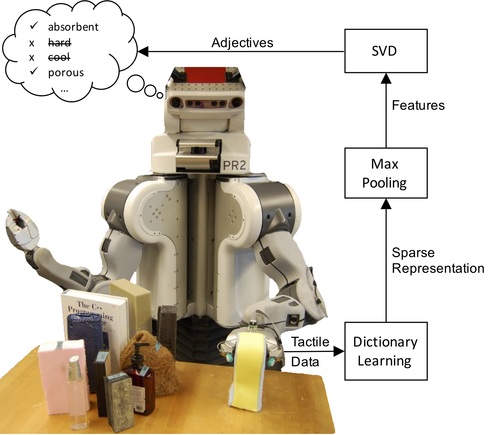

Richardson, B. A., Kuchenbecker, K. J.

Improving Haptic Adjective Recognition with Unsupervised Feature Learning

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), pages: 3804-3810, Montreal, Canada, May 2019 (inproceedings)

Fiedler, T., Vardar, Y.

A Novel Texture Rendering Approach for Electrostatic Displays

In Proceedings of the International Workshop on Haptic and Audio Interaction Design (HAID), Lille, France, March 2019 (inproceedings)

2018

Kumar, A., Gourishetti, R., Manivannan, M.

A Feasibility Study of Force Feedback using Computer-Mouse

pages: 1-6, 26th Conference of National Academy of Psychology, December 2018 (conference)

Gourishetti, R., Isaac, J. H. R., Manivannan, M.

Passive Probing Perception: Effect of Latency in Visual-Haptic Feedback

pages: 186-198, Springer, Cham, EuroHaptics, June 2018 (inproceedings)

2017

Kumar, A., Gourishetti, R., Manivannan, M.

Mechanics of Pseudo-Haptics with Computer Mouse

In Proceedings of the IEEE International Symposium on Haptic, Audio and Visual Environments and Games (HAVE), pages: 1-6, IEEE, December 2017 (inproceedings)

Ng, C., Zareinia, K., Sun, Q., Kuchenbecker, K. J.

Stiffness Perception during Pinching and Dissection with Teleoperated Haptic Forceps

In Proceedings of the IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), pages: 456-463, Lisbon, Portugal, August 2017 (inproceedings)

Young, E. M., Kuchenbecker, K. J.

Design of a Parallel Continuum Manipulator for 6-DOF Fingertip Haptic Display

In Proceedings of the IEEE World Haptics Conference (WHC), pages: 599-604, Munich, Germany, June 2017, Finalist for best poster paper (inproceedings)

Hu, S., Kuchenbecker, K. J.

High Magnitude Unidirectional Haptic Force Display Using a Motor/Brake Pair and a Cable

In Proceedings of the IEEE World Haptics Conference (WHC), pages: 394-399, Munich, Germany, June 2017 (inproceedings)

Brown, J. D., Fernandez, J. N., Cohen, S. P., Kuchenbecker, K. J.

A Wrist-Squeezing Force-Feedback System for Robotic Surgery Training

In Proceedings of the IEEE World Haptics Conference (WHC), pages: 107-112, Munich, Germany, June 2017 (inproceedings)

Burka, A., Kuchenbecker, K. J.

Handling Scan-Time Parameters in Haptic Surface Classification

In Proceedings of the IEEE World Haptics Conference (WHC), pages: 424-429, Munich, Germany, June 2017 (inproceedings)

Burka, A., Rajvanshi, A., Allen, S., Kuchenbecker, K. J.

Proton 2: Increasing the Sensitivity and Portability of a Visuo-haptic Surface Interaction Recorder

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), pages: 439-445, Singapore, May 2017 (inproceedings)